The Speed Problem That Changed Broadcast Strategy

Sports broadcasting used to operate on a simple timeline: capture the game, edit the highlights, distribute them within a few hours. That model worked when television was the only distribution channel and audiences had no alternative. Today, the window between a live moment and the first published highlight determines whether a broadcaster captures the audience or loses it to a fan with a phone.

Social platforms have compressed the content cycle to seconds. A goal clip that reaches Instagram within 60 seconds earns significantly more impressions than one posted 10 minutes later. For broadcasters holding expensive rights deals, this speed gap has real financial consequences. Rights fees only generate returns when the content reaches audiences at the moments those audiences are paying attention. Publish too late and the moment has already been shared, discussed and forgotten by the time the official clip appears.

The traditional solution was to hire more editors and add more workstations. But this scales linearly: doubling the output means doubling the headcount and the cost. A broadcaster covering 15 simultaneous matches on a Champions League evening would need 15 dedicated editing stations staffed in real time. For most organizations, that is not economically viable.

This is why the broadcast industry has shifted toward AI-powered highlight automation. Not as an experiment or a future roadmap item, but as operational infrastructure that is already running inside production workflows. As we detailed in our complete guide to AI sports highlights, the technology has matured from proof-of-concept to production-grade within the last two years.

How AI Highlight Pipelines Work Inside a Broadcast Operation

Understanding the technical workflow matters because it explains why AI does not simply replace editors. It removes the mechanical bottleneck so editors can focus on editorial judgment.

Feed Ingestion at Scale

A broadcast operation managing multiple live feeds needs a platform that handles RTMP, HLS and SRT streams simultaneously without manual configuration per feed. At Zentag AI, ingest is zero-configuration: connect the feed and the system starts processing immediately. For a broadcaster managing rights across football, basketball, tennis and handball, this eliminates the per-sport setup overhead that would otherwise require dedicated technical staff for each feed.
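To make "no manual configuration per feed" concrete, here is a minimal sketch of how an ingest layer might infer the protocol from the feed URL alone. It is an illustration under stated assumptions, not a description of how Zentag AI implements ingest: the function name `classify_feed` and the example URLs are hypothetical, and real feeds may need deeper probing than a scheme and file-extension check.

```python
from urllib.parse import urlparse


def classify_feed(url: str) -> str:
    """Guess the streaming protocol of a feed from its URL (hypothetical helper).

    Assumption: RTMP and SRT feeds are identified by URL scheme, and HLS
    feeds are HTTP(S) URLs that point at an .m3u8 playlist.
    """
    parsed = urlparse(url)
    scheme = parsed.scheme.lower()
    if scheme in ("rtmp", "rtmps"):
        return "RTMP"
    if scheme == "srt":
        return "SRT"
    if scheme in ("http", "https") and parsed.path.lower().endswith(".m3u8"):
        return "HLS"
    raise ValueError(f"Unrecognized feed URL: {url}")


# Example feeds (made-up URLs) for one multi-sport evening.
feeds = [
    "rtmp://ingest.example.com/live/football-1",
    "https://cdn.example.com/basketball-2/index.m3u8",
    "srt://ingest.example.com:9000?mode=caller",
]
for feed in feeds:
    print(feed, "->", classify_feed(feed))
```

The point of the sketch is that the operator supplies only a URL; routing each feed to the right demuxer is the platform's job.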

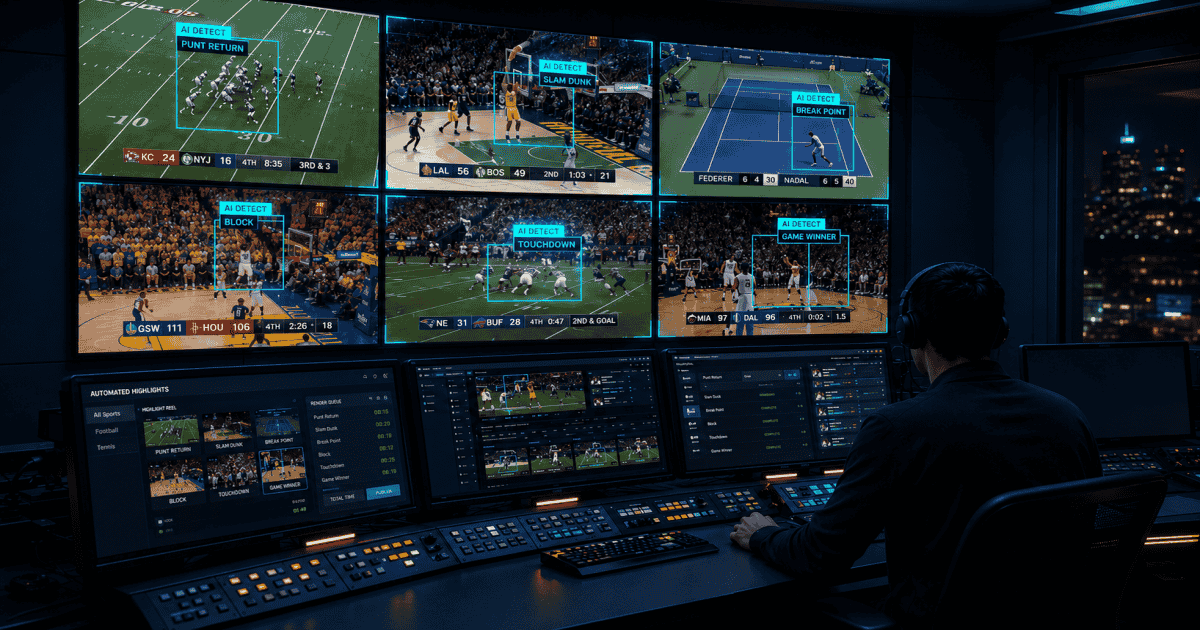

Multi-Dimensional Moment Detection

The core advantage of AI over manual monitoring is parallel analysis. While a human editor watches one feed and relies on instinct and experience, AI analyzes every frame across multiple signals simultaneously. Computer vision tracks player actions, ball position and celebration patterns. Audio analysis detects crowd noise spikes and commentator excitement. Game context weighs each moment against match state. An 88th-minute equalizer scores higher than a comfortable third goal because the editorial significance is fundamentally different.
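The idea of weighing a moment against match state can be sketched in a few lines. The weights, the field names and the `context_multiplier` formula below are all illustrative assumptions, chosen only to show how the same raw detection can score differently depending on when it happens and how close the match is. A production model would learn these relationships rather than hard-code them.

```python
from dataclasses import dataclass


@dataclass
class Moment:
    vision: float          # 0..1 confidence from computer vision (assumed scale)
    audio: float           # 0..1 crowd and commentator excitement (assumed scale)
    minute: int            # match minute when the moment occurred
    goal_diff_after: int   # goal difference between the teams after the moment


def context_multiplier(moment: Moment) -> float:
    """Boost late moments in close matches (illustrative formula)."""
    closeness = 1.0 / (1.0 + abs(moment.goal_diff_after))
    lateness = 1.0 + min(moment.minute, 90) / 90.0
    return closeness * lateness


def significance(moment: Moment, w_vision: float = 0.6, w_audio: float = 0.4) -> float:
    """Combine the raw signals, then scale by game context."""
    raw = w_vision * moment.vision + w_audio * moment.audio
    return raw * context_multiplier(moment)


# Same raw signal strength, very different match situations.
equalizer = Moment(vision=0.9, audio=0.9, minute=88, goal_diff_after=0)
third_goal = Moment(vision=0.9, audio=0.9, minute=60, goal_diff_after=3)

print(significance(equalizer) > significance(third_goal))
```

With identical vision and audio scores, the late equalizer ranks above the comfortable third goal purely because of the context term.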

This multi-signal approach is what separates broadcast-grade AI from basic clipping tools that trigger on scoreboard changes alone. A scoreboard trigger catches goals but misses dramatic saves, controversial decisions, emotional celebrations and the near-misses that make compelling viewing. The best moments in sports are often the ones that almost happened.

Automated Packaging and Multi-Format Output

Detection is only half the workflow. Every detected moment needs to become a finished, branded clip before it has any value. The AI applies configured branding, overlays, lower thirds and score bugs automatically. For broadcasters publishing across television, web and social simultaneously, AI Reframe technology converts horizontal broadcast footage into vertical 9:16 formats for Instagram Reels, TikTok and YouTube Shorts in a single pass. No re-editing, no separate workflow, no additional staff.
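The geometric core of converting horizontal footage to vertical is choosing a crop window that follows the action. The sketch below shows only that step, assuming some upstream tracker has already supplied a horizontal focus point (`focus_x`). The function name and the even-width rounding are assumptions for illustration; this is not the actual AI Reframe implementation.

```python
def vertical_crop(frame_w: int, frame_h: int, focus_x: float,
                  aspect_w: int = 9, aspect_h: int = 16) -> tuple:
    """Return (left, top, width, height) of a full-height crop window.

    The window keeps the full frame height, uses the target aspect ratio
    for its width, centers on focus_x and is clamped to the frame edges.
    """
    crop_h = frame_h
    crop_w = int(frame_h * aspect_w / aspect_h)
    crop_w -= crop_w % 2  # many video encoders expect even dimensions
    left = int(focus_x - crop_w / 2)
    left = max(0, min(left, frame_w - crop_w))
    return left, 0, crop_w, crop_h


# A 1920x1080 broadcast frame with the action in the middle of the pitch.
print(vertical_crop(1920, 1080, focus_x=960))
# Action near the right touchline: the window is clamped to stay in frame.
print(vertical_crop(1920, 1080, focus_x=1900))
```

In practice the focus point would also be smoothed over time so the vertical frame does not jitter, which this sketch leaves out.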

This multi-format output capability matters more than most broadcasters initially realize. The same highlight needs to exist in at least three aspect ratios (16:9, 9:16, 1:1) to cover the primary distribution channels. Without automation, that means three editing passes per clip. With 20 highlights per match and 8 matches on a weekend, the multiplication adds up to 480 individual edit tasks. AI reduces that to zero manual effort.

Three Broadcast Workflows AI Has Transformed

Live Social Clipping During the Match

The highest-value use case for broadcasters is publishing highlights while the game is still in progress. Every significant moment (a goal, a red card, a controversial VAR decision) generates a finished clip within seconds, ready for social distribution. This is what turns a rights-holding broadcaster from a delayed content publisher into a real-time media operation.

The operational difference is significant. Without AI, a social media team watches the feed, communicates with an editor, waits for the clip to be cut, reviews it, adds branding and publishes. That chain takes 5 to 15 minutes per clip. With an automated pipeline, the social team receives a branded, formatted clip in their publishing queue within seconds of the on-field moment. Their role shifts from managing a bottleneck to curating and distributing content that already exists.

Smart Live Recaps for Late-Tuning Audiences

A challenge every OTT platform faces is the viewer who joins a live stream 20 minutes in. Without context, they are less engaged and more likely to drop off. Smart Live Recaps solve this by generating a continuously updated narrative summary of the match so far. A viewer joining at halftime sees the key moments, turning points and current state of play within seconds. No manual production required.

For OTT platforms measuring engagement metrics like average watch time and session depth, this capability directly impacts the numbers. A viewer who catches up quickly watches longer. A viewer who is confused about what happened changes the channel.
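At its simplest, a recap for a late joiner is a selection problem: take the moments detected so far, keep the most significant ones, and present them in match order. The sketch below assumes each moment is a `(minute, label, significance)` tuple and that significance scores already exist. The function name `recap` and the sample data are hypothetical.

```python
def recap(moments, join_minute, max_items=3):
    """Pick the most significant moments before join_minute, in match order."""
    seen = [m for m in moments if m[0] <= join_minute]
    top = sorted(seen, key=lambda m: m[2], reverse=True)[:max_items]
    # Re-sort the selected moments chronologically for a coherent narrative.
    return [label for minute, label, score in sorted(top)]


# Illustrative match timeline (made-up data).
timeline = [
    (5, "early chance", 0.30),
    (12, "goal 1-0", 0.90),
    (30, "red card", 0.80),
    (41, "goal 1-1", 0.95),
    (60, "goal 2-1", 0.90),
]

# A viewer joining at halftime only sees what has happened so far.
print(recap(timeline, join_minute=45))
```

Because the selection is recomputed against the current minute, the same function yields a different recap for a viewer who joins at the 70th minute.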

Post-Match Packages at Scale

Even traditional post-match highlight packages benefit from AI automation. Instead of starting with raw footage and building a package from scratch, the editor starts with AI-generated clips already cut, scored and organized by significance. The editor's role becomes curatorial: selecting the best moments, adjusting the narrative arc, adding commentary where needed. The work shifts from mechanical to editorial. A package that took 45 minutes to assemble from scratch takes 10 minutes when the raw materials are already prepared.

What Broadcasters Get Wrong When Evaluating AI Highlights

Having worked with broadcast teams across multiple sports and markets, we see certain evaluation mistakes appear repeatedly.

Evaluating on demo footage instead of your own content. Every vendor's demo reel looks impressive. The real test is how the system performs on your specific sports, your camera angles, your audio environment and your branding requirements. A system optimized for football may struggle with tennis or handball because the visual and audio patterns are fundamentally different. Platforms like Zentag AI support 50+ sports because the AI is architected to handle this variation rather than being hard-coded for a single sport.

Ignoring the speed of the pipeline. If the AI detects a moment in 2 seconds but takes 3 minutes to render and export the clip, the speed advantage disappears. The entire pipeline (detection, packaging, branding, format conversion and delivery) needs to operate in seconds, not minutes. Processing 4K footage at 10x speed is meaningfully different from processing it at 1.5x.

Treating it as a replacement for editorial staff. AI handles the mechanical work: watching every frame, detecting moments, cutting clips, applying branding, converting formats. What it does not do is make editorial decisions about narrative, tone or which moments to prioritize for a specific audience. The broadcasters getting the most value from AI are those who use it to eliminate the production bottleneck so their editorial teams can focus entirely on storytelling.

Overlooking archive value. Live content is the obvious use case, but most broadcasters sit on years of archived footage that has never been properly catalogued or clipped. AI-powered archive management can process historical content at scale, turning dormant footage libraries into searchable, clippable assets that generate ongoing value.
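The pipeline-speed point above is easy to check with arithmetic during an evaluation. The sketch below adds up per-stage latency and compares processing speeds, using the 2-second detection, 3-minute render, 10x and 1.5x figures from the text. The 90-minute footage length and the function names are assumptions for illustration.

```python
def end_to_end_seconds(stages: dict) -> float:
    """Total time from on-field moment to deliverable clip."""
    return sum(stages.values())


def processing_minutes(footage_minutes: float, speed_factor: float) -> float:
    """Time to process footage at a given multiple of real time."""
    return footage_minutes / speed_factor


# Fast detection does not help if rendering dominates the budget.
slow_pipeline = {"detection": 2, "render_and_export": 180}
print(end_to_end_seconds(slow_pipeline), "seconds end to end")

# Assumed 90 minutes of 4K footage at the two speeds mentioned above.
print(processing_minutes(90, 10), "minutes at 10x")
print(processing_minutes(90, 1.5), "minutes at 1.5x")
```

Under these assumptions, the slow pipeline takes 182 seconds per clip despite 2-second detection, and the same 90 minutes of footage takes 9 minutes at 10x versus 60 minutes at 1.5x.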

The Economics of Automated Broadcast Highlights

The financial case for AI highlights is straightforward when you map the current manual workflow against an automated one.

Consider a broadcaster covering a domestic football league with 9 matches per matchday, 34 matchdays per season. Manual highlight production requires approximately 9 editors working simultaneously on match days (one per match), each producing 8-12 clips over a 2-hour post-production window. At an average cost of $60/hour including benefits and tools, that is $1,080 per matchday in editing labor alone. Across a 34-matchday season: $36,720 for a single competition, producing roughly 3,000 clips total.
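The manual-workflow figures above can be reproduced directly. The inputs in the sketch below are the ones stated in the text; the per-clip cost at the end is derived from them and does not appear in the original figures.

```python
EDITORS = 9                 # one editor per match
HOURS_PER_MATCHDAY = 2      # post-production window
HOURLY_COST = 60            # USD, including benefits and tools
MATCHDAYS = 34
CLIPS_PER_MATCH = (8, 12)   # low and high estimate per editor

cost_per_matchday = EDITORS * HOURS_PER_MATCHDAY * HOURLY_COST
season_cost = cost_per_matchday * MATCHDAYS

clips_low = EDITORS * CLIPS_PER_MATCH[0] * MATCHDAYS
clips_high = EDITORS * CLIPS_PER_MATCH[1] * MATCHDAYS

# Derived figure: labor cost per clip at each end of the output range.
cost_per_clip_high_output = season_cost / clips_high
cost_per_clip_low_output = season_cost / clips_low

print(f"Per matchday: ${cost_per_matchday:,}")
print(f"Per season:   ${season_cost:,}")
print(f"Clips per season: {clips_low:,} to {clips_high:,}")
print(f"Labor cost per clip: ${cost_per_clip_high_output:.2f} "
      f"to ${cost_per_clip_low_output:.2f}")
```

The clip range of 2,448 to 3,672 brackets the "roughly 3,000" estimate, and the labor cost works out to between $10 and $15 per clip before any format conversion is counted.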

An AI-powered pipeline covering the same 9 matches simultaneously produces 15-30 clips per match (significantly higher volume), delivers them in real time rather than post-match, handles all format conversions automatically and requires one or two operators to monitor rather than nine editors to produce. The clips are branded, formatted and distribution-ready without post-production.

The saving is not just in headcount. It is in the content that was never created under the manual model. Those 15 near-misses and dramatic saves that the editor skipped because there was not enough time? Each one is a social media post that drives engagement, grows audiences and increases the value of the rights the broadcaster already holds.

Where Broadcast AI Highlights Are Heading

The current generation of AI highlights solves the detection-and-packaging problem. The next wave is about intelligence and personalization.

Personalized highlight feeds are the clearest near-term development. Rather than one highlight package for all audiences, AI can generate different packages for different viewer segments: a tactical analysis cut for core fans, a drama-focused cut for casual viewers, a player-specific cut for fantasy sports participants. The underlying detection is the same; the editorial assembly becomes audience-aware.
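"Same detection, audience-aware assembly" can be illustrated as re-ranking one shared pool of clips with different weights per viewer segment. Everything in this sketch is hypothetical: the tag names, the segment weights and the clip data are invented to show the shape of the idea, not how any particular platform models its audiences.

```python
# One shared pool of detected clips, each tagged with per-dimension scores.
CLIPS = [
    {"id": "goal_88", "tags": {"drama": 0.9, "tactics": 0.3}},
    {"id": "pressing_trap", "tags": {"drama": 0.2, "tactics": 0.9}},
    {"id": "penalty_save", "tags": {"drama": 0.8, "tactics": 0.2}},
]

# Assumed weights: how much each segment cares about each dimension.
SEGMENT_WEIGHTS = {
    "core_fan": {"tactics": 1.0, "drama": 0.3},
    "casual": {"drama": 1.0, "tactics": 0.2},
}


def assemble(clips, segment, top_n=2):
    """Rank the shared clip pool for one audience segment."""
    weights = SEGMENT_WEIGHTS[segment]

    def score(clip):
        return sum(weights.get(tag, 0.0) * value
                   for tag, value in clip["tags"].items())

    ranked = sorted(clips, key=score, reverse=True)
    return [clip["id"] for clip in ranked[:top_n]]


print("core_fan:", assemble(CLIPS, "core_fan"))
print("casual:  ", assemble(CLIPS, "casual"))
```

Detection runs once; only the ranking step changes per segment, which is why personalization adds little processing cost on top of an existing pipeline.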

Deeper integration with second-screen experiences is another trajectory. As broadcasters build companion apps and interactive features, AI-generated highlights become the content layer that powers real-time notifications, in-app clip sharing and social engagement within the broadcast ecosystem rather than outside it.

For broadcasters evaluating platforms today, the strategic question is not whether to adopt AI highlights. That decision is effectively made. The question is whether the platform they choose can scale from current requirements to these emerging use cases without requiring a wholesale infrastructure change. Building on a platform designed for real-time multi-sport automation, like Zentag AI, means the transition from current to next-generation workflows happens through configuration rather than migration.